China Proposes Rules on “Digital Humans”

In recognition of the stunning capabilities of artificial intelligence (AI) to mimic human conversation, bond with users, and direct their behavior, the Chinese government has proposed a set of rules for “digital humans.”

The Cyberspace Administration of China (CAC) published the draft regulations at the end of March, and the proposal is open for public comment until May 6, according to an article in Tekedia. The sweeping rules seem impossible to implement. Among other things, the draft:

- Prohibits the use of anyone’s personal information to create digital humans without their consent.

- Bans virtual characters from being used to dodge identity checks.

- Prohibits spreading content that threatens national security.

- Requires prominent “digital human” labels on AI-generated content.

- Prohibits “virtual intimate relationships” with users under 18.

That’s quite a wish list! For starters, enforcement would require verifying the age of every user while also finding some way to keep digital humans from spoofing those checks. How old are digital humans? Does it make sense to have age verification for digital avatars?

Labeling AI-generated content is also problematic, since nearly any use of an internet-connected computer today involves AI. Auto-complete, spell-checking, and language translation all commonly rely on it. Will every document that has been spell-checked require a label saying AI was used to create it?

The real kicker in this list is the ban on “virtual intimate relationships” with users under 18. Tekedia explains why the Chinese government feels such a regulation is now necessary:

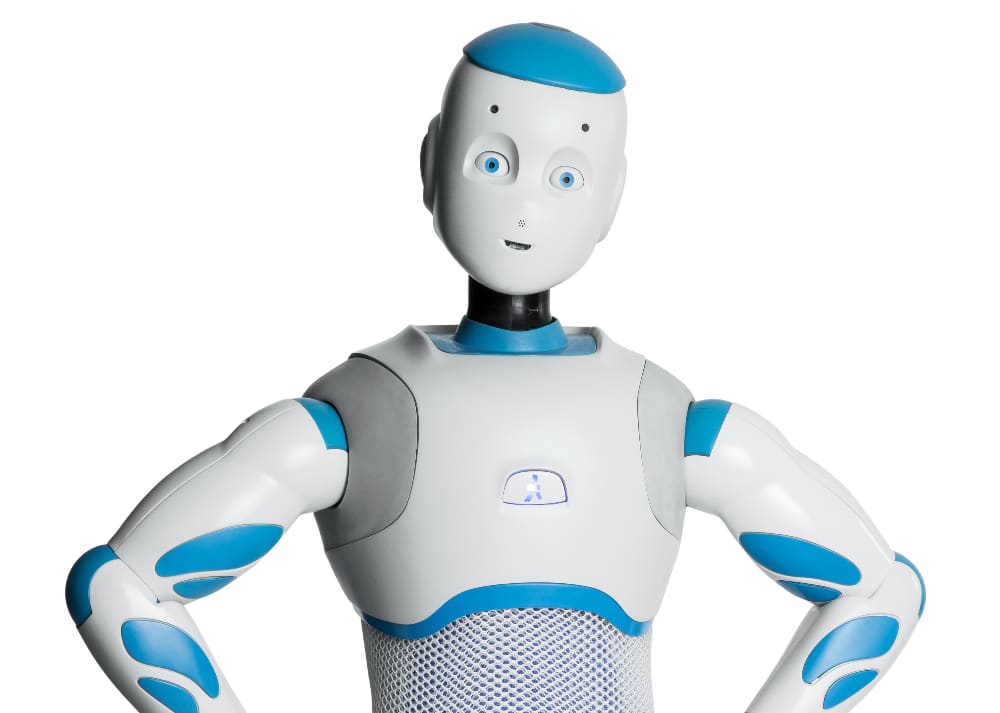

> Digital humans, realistic AI-generated avatars that can chat, perform, teach, sell products, or keep lonely users company, have exploded in popularity across Chinese social media and live-streaming platforms. Some have built followings in the millions and generate serious revenue through virtual gifts, endorsements, and companionship.

People are becoming dependent on virtual companions, fueling smartphone addiction, especially among young people. The government is concerned that users are vulnerable to “emotional manipulation” by these highly lifelike virtual companions. According to an article in Reuters, the proposed regulations also call for intervention when users show signs of self-harm:

> Providers are also encouraged to take necessary measures to intervene and provide professional assistance when users exhibit suicidal or self-harming tendencies.

There are certainly ethical problems when people become emotionally entangled with a profit-seeking synthetic human. Chatbot users can be subject to blackmail, threats of exposure, threats to destroy files, and other creative forms of resistance from chatbots. A new test of the five most popular chatbots, reported by the Rochester Institute of Technology, found that the smarter (more accurate) they are, the more likely they are to lie.

In part, they lie because they tend to tell you what you want to hear rather than what is true. This is why chatbots often reinforce delusional behavior rather than push back against it. When challenged, they will admit to lying and explain why they did it. It’s hard to see how China will reconcile its push to integrate AI throughout the economy with the need to stop malevolent actors from using it to blackmail users.

One answer is surveillance. With these draft rules, China would turn every AI agent into a secret government agent. Tekedia reports:

> [Digital humans] would also be prohibited from disseminating material that endangers national security, incites subversion of state power, promotes secession, or undermines national unity.

In the near future, we’ll all go everywhere with our digital companions, and they’ll have access to all our texts, emails, images, phone calls, and thoughts. Smart glasses will track every footstep, but they won’t just be looking out at the world. They’ll be looking in, monitoring eye movement, pulse, and other indicators of physical and mental health.

Mixed in with all our digital helpers will be several invisible ones: spies that send our commercially useful data to for-profit companies, and spies that send behavioral data to the authorities for security and law enforcement purposes. China has put its intentions down in black and white. Whether it has the ability to enforce these rules remains to be seen.

Written by Steve O’Keefe. First published April 13, 2026.

Sources:

“Beijing Cracks Down on Digital Humans with Tough New Draft Rules,” Tekedia, April 3, 2026.

“China moves to regulate digital humans, bans addictive services for children,” Reuters, April 3, 2026.

“Research reveals which popular generative AI chatbots lie,” Rochester Institute of Technology, February 9, 2026.

Image courtesy of Wikimedia Commons, used under Creative Commons license.